Found is a virtual obesity treatment platform offering medication management, provider care, and membership-based access to treatment. The sign-up funnel was the primary entry point for new patients, and it was underperforming in ways that were hurting both conversion and long-term retention.

The funnel wasn't losing people because the price was too high. It was losing them because the price they saw at sign-up wasn't the price they paid.

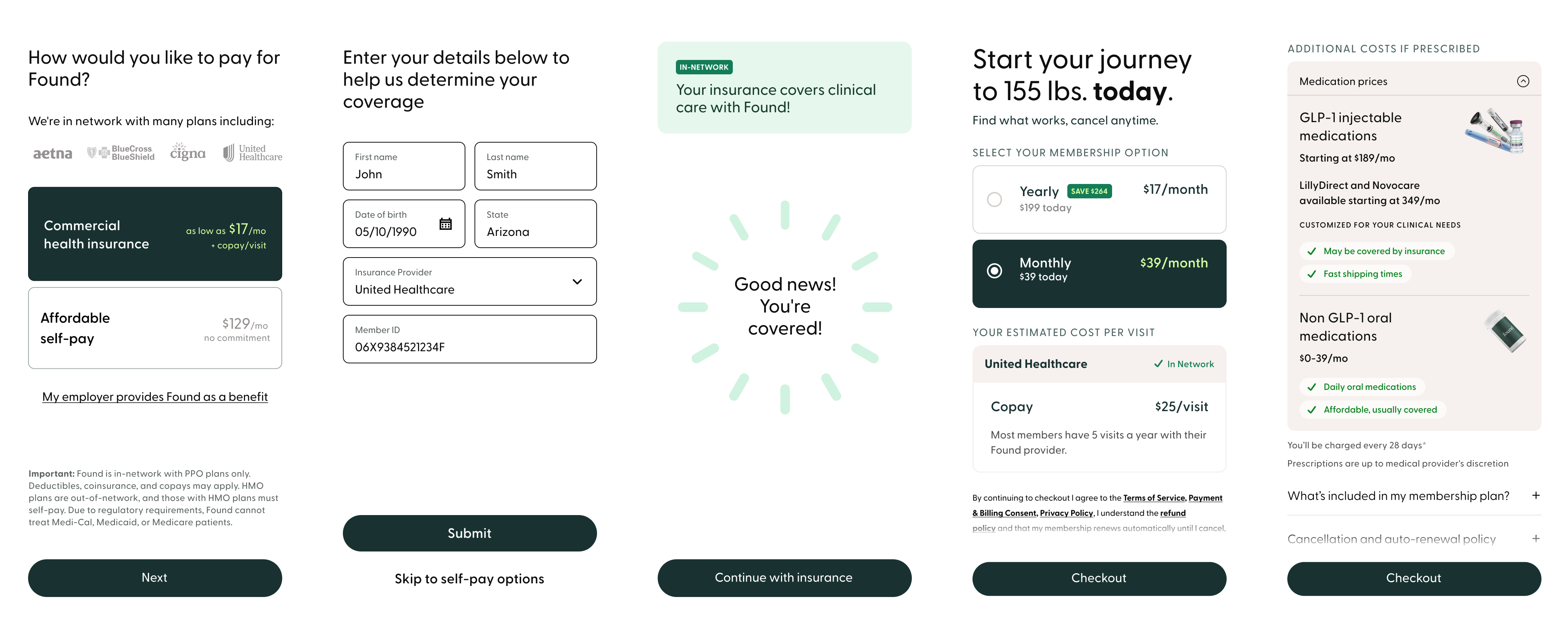

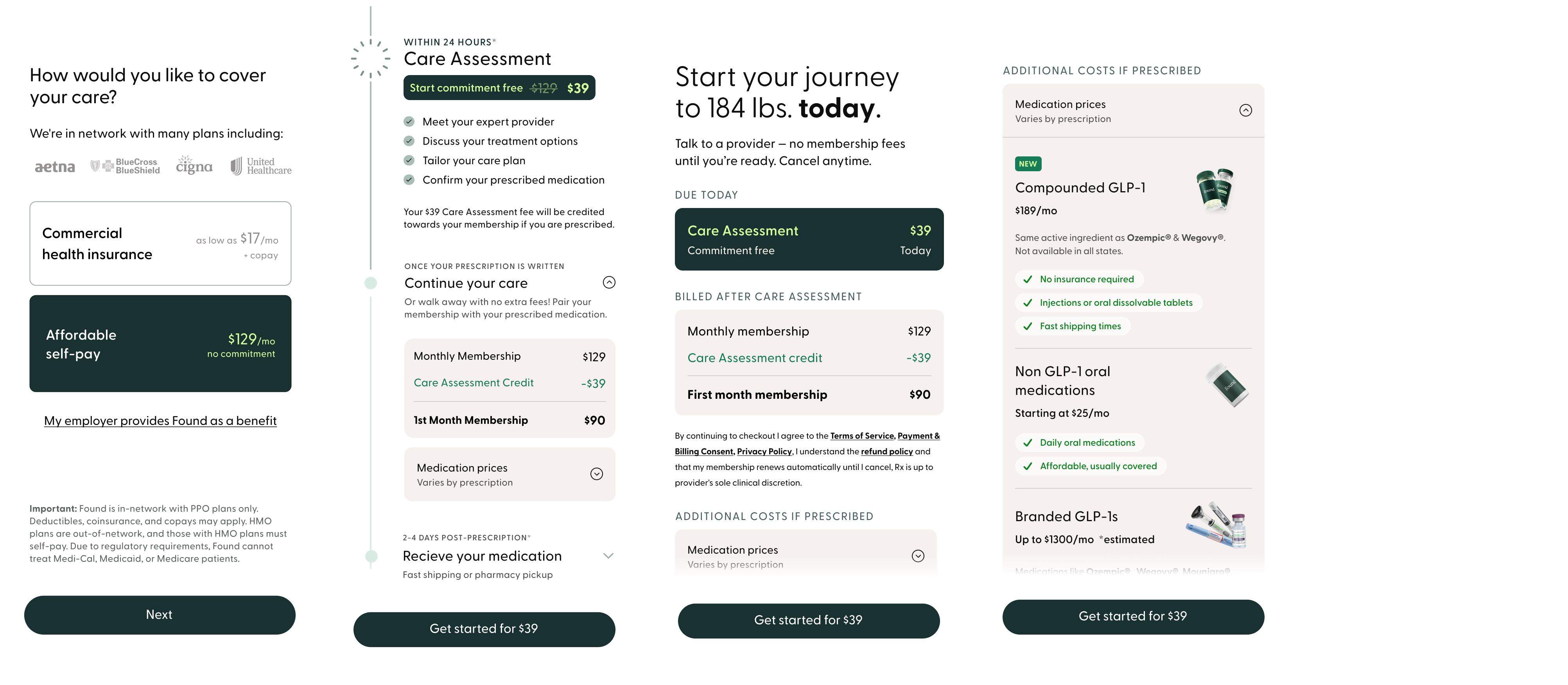

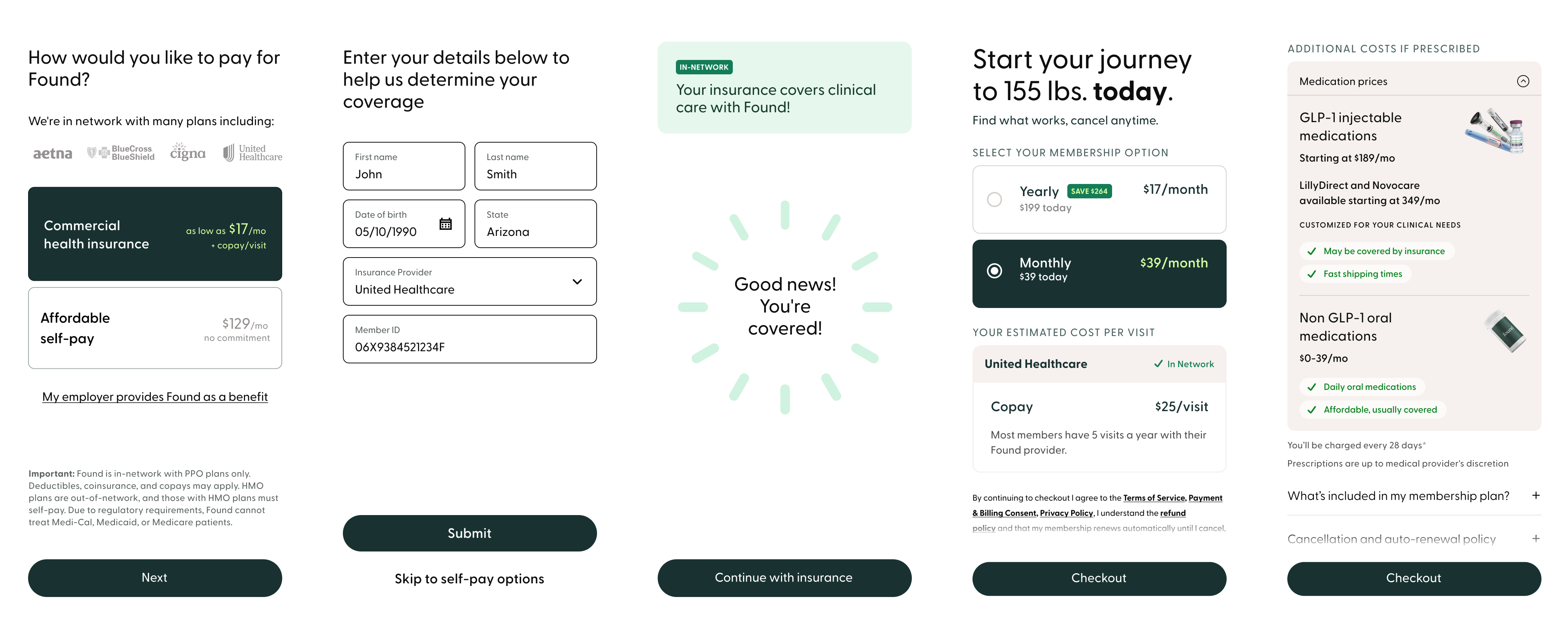

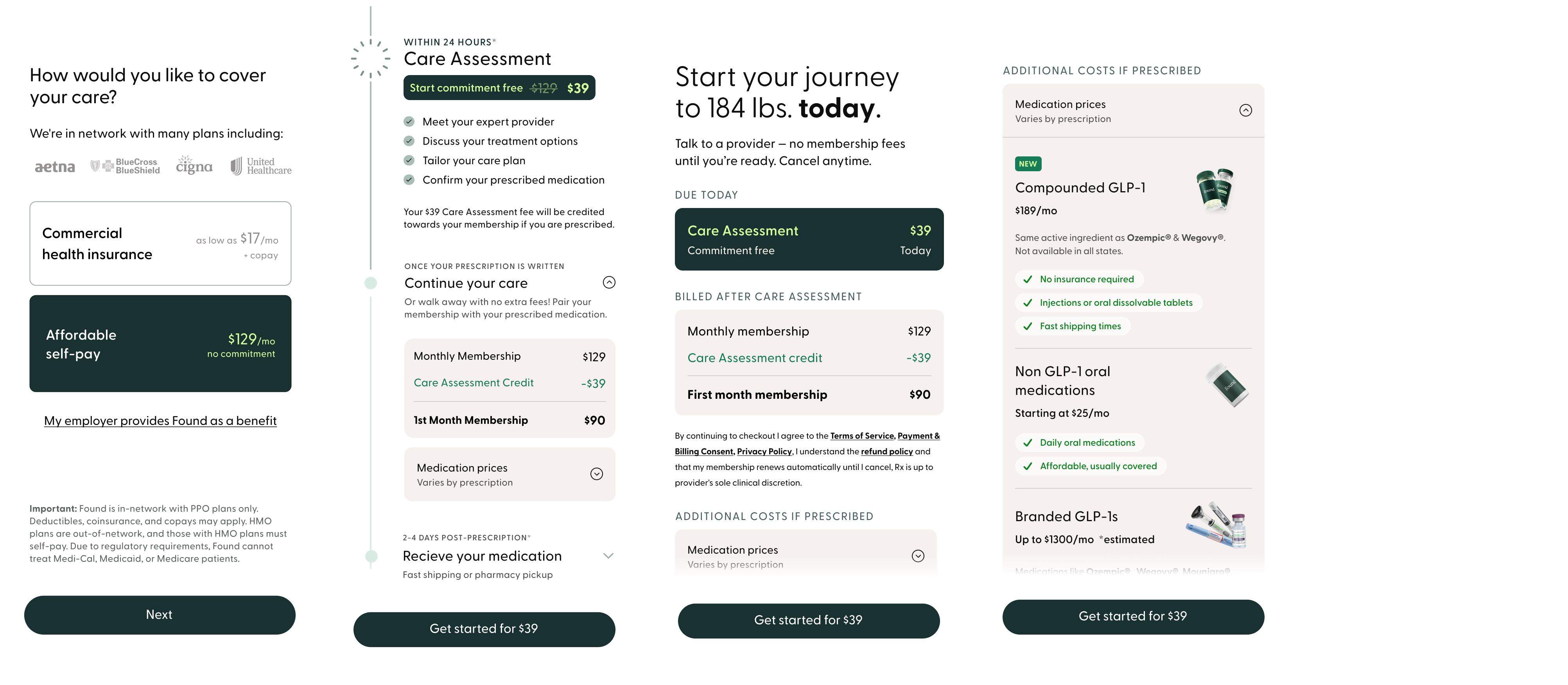

Found's pricing model was genuinely complex. Beyond the membership fee, patients might owe insurance co-pays and medication costs, each with different billing cadences and eligibility conditions. But the sign-up funnel surfaced only the subscription price before asking users to commit. Three structural failures were driving it:

Research confirmed the core problem. Through a usability study with recruited participants matched to Found's clinical profile, we found that the pricing step wasn't failing because the subscription cost was too high. It was failing because the subscription price was the only cost users saw before committing. For some, additional costs pushed the total outside their budget. For all of them, it felt like a breach of trust.

Pricing confusion was dragging down LTV and putting pressure on CAC. But the org was hesitant to surface costs transparently, worried it would depress conversion. I reframed the challenge: transparency wasn't a conversion risk. It was a prerequisite for sustainable growth.

"If it was covered by insurance or if there is a monthly fee, knowing that upfront before we went through all these forms would be better instead of finding out sixty minutes after I fill out all that stuff that it's too expensive." — Usability study participant

The internal resistance was real and reasonable. Marketing and Product were concerned that introducing more friction into the funnel would cost conversions. And there was a concrete financial objection: running real-time insurance verification would cost approximately $7 per user, a meaningful addition to CAC at scale. The verification capability already existed in the product. The question wasn't capability. It was whether moving verification earlier in the funnel was worth the cost and the risk to conversion.

I championed a phased testing approach to address that concern. Rather than asking the org to commit to a high-cost, high-friction change on faith, I structured the work across three phases with clear gates before any rollout.

The two experiments targeted different segments with different cost problems. Insurance users needed coverage clarity and co-pay confirmation upfront. Cash users needed to understand the full picture before subscribing. Both shared a constraint: medications are prescribed after sign-up, so the funnel couldn't guarantee specifics. These distinct problems required distinct solutions. Both came back to the same thesis: you cannot build retention on information you withheld at sign-up.

In practice, that meant giving people enough information to make a confident decision before they commit.

Both paths included an expandable medication pricing section on the plan page. The sign-up funnel couldn't promise any user exactly what they would pay for medication, because the provider decides what to prescribe only after the care assessment. What it could do was surface the range of available medication types and their costs, so users arrived at checkout knowing a medication cost was coming. No surprises at the first billing cycle.

The $7 per-user cost of running the insurance verification was the central business objection. I made the case that a subscriber who converted with accurate cost expectations was worth substantially more in LTV than one who converted on an incomplete picture and churned at first billing. Both solutions were designed, prototyped, and A/B tested before any recommendation was made to scale.

The data validated the thesis. But the outcome was more nuanced than a simple win.

When the new flow rolled out fully, the team anticipated a roughly 10% decrease in raw conversion volume as an expected tradeoff. Showing users real costs upfront meant some who would have converted on incomplete information no longer did. That was the point. The subscribers who did convert were higher quality, with accurate expectations and a significantly higher likelihood of staying beyond Month 1. Conversion volume was flat, but what conversion meant had changed.

Translated to scale, the Week 1 retention lift represented around 3,000 additional retained users per 25,000-subscriber cohort. The $7 per-user verification cost paid for itself many times over in reduced churn and improved LTV. Leadership committed to continued iteration in Q1, with the transparency-first approach established as the new baseline.
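As a rough check on that claim, the arithmetic can be sketched in a few lines. This is a back-of-envelope illustration, not the model the team used. It takes the $7 cost and the 3,000-per-25,000 figure from above and assumes, purely for simplicity, that verification runs exactly once for each subscriber in the cohort. In practice verification would also run for funnel users who never subscribe, so the real break-even would be higher. The function names are hypothetical.

```python
def retention_lift_points(extra_retained: int, cohort_size: int) -> float:
    """Retention lift expressed in percentage points of the cohort."""
    return 100 * extra_retained / cohort_size


def break_even_value_per_retained_user(
    cost_per_verification: float,
    users_verified: int,
    extra_retained: int,
) -> float:
    """Value each additional retained user must bring in to cover verification spend."""
    total_verification_cost = cost_per_verification * users_verified
    return total_verification_cost / extra_retained


# Figures from the case study: 3,000 extra retained users per 25,000 subscribers.
lift = retention_lift_points(extra_retained=3_000, cohort_size=25_000)

# ASSUMPTION: one $7 verification per subscriber in the cohort (25,000 checks).
break_even = break_even_value_per_retained_user(
    cost_per_verification=7.0,
    users_verified=25_000,
    extra_retained=3_000,
)

print(f"Retention lift: {lift:.1f} percentage points")
print(f"Break-even value per additional retained user: ${break_even:.2f}")
```

Under that simplifying assumption, each additional retained user only needs to contribute a little under $60 in lifetime value for the verification spend to break even. Changing `users_verified` to the number of funnel users actually verified gives a more conservative estimate.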

The Assessment First path told a different story. Results for cash users didn't produce a meaningful lift, and we didn't pursue a full rollout. What the test did produce was signal: the friction of an upfront care assessment wasn't the blocker we hypothesized, but the underlying problem of cost uncertainty for cash users remained unsolved.

The organizational shift mattered too. This work reframed how the business thought about the relationship between pricing transparency and growth. The Assessment First signal directly shaped the next round of testing. Staged subscription models explored as follow-on work carried that thread forward.